AI Data Center Power and Cooling: What Engineers Must Know

When an enterprise moves from running inference on a handful of GPUs to operating dense training clusters, the physical infrastructure beneath those workloads stops being a background concern and becomes the primary constraint on performance, reliability, and total cost of ownership. Standard colocation facilities designed for 5–10 kW per rack cannot simply be upgraded with a fan order. The gap between commodity data center design and purpose-built AI data center infrastructure is wide, and closing it mid-deployment is expensive.

This guide gives CTOs and infrastructure engineers a precise look at what AI workloads actually demand from power delivery and cooling systems, where conventional facilities fall short, and what questions to ask any infrastructure partner before committing to a long-term deployment.

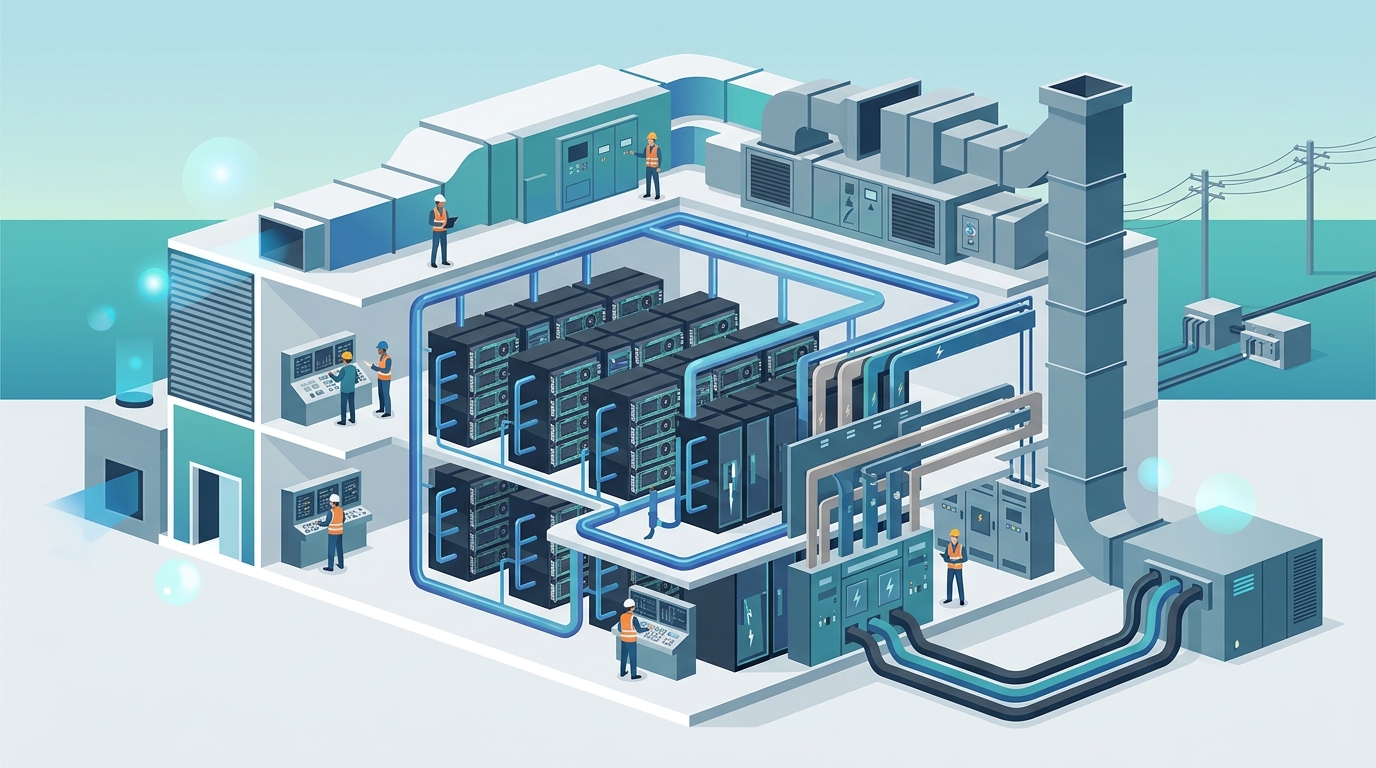

What Is AI Data Center Infrastructure?

AI data center infrastructure refers to the physical systems (power delivery, cooling architecture, network fabric, and structural density) engineered specifically to support GPU and accelerator clusters at sustained, high-utilization loads. Unlike general-purpose compute environments, AI workloads draw power nearly continuously at peak levels. An H100 SXM GPU draws up to 700 W under full training load, so the eight GPUs in a standard 8-GPU server account for 5.6 kW on their own; with CPUs, memory, networking, and fans added, the complete server approaches 6.5–7 kW. A single rack holding four such servers already reaches 26–28 kW, two to five times the design ceiling of most enterprise colocation facilities.
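The arithmetic is easy to sanity-check. The sketch below multiplies out the 700 W per-GPU figure; the non-GPU overhead per server (CPUs, memory, NICs, fans) is a hypothetical parameter chosen to bracket the 6.5–7 kW range above, not a vendor specification.

```python
# Rack power estimate built from the per-GPU figure quoted above.
# Assumption: non-GPU overhead per server is a hypothetical input;
# real values vary by vendor and configuration.

H100_TRAINING_W = 700  # per-GPU draw under full training load
GPUS_PER_SERVER = 8


def server_power_kw(gpu_w: float, gpus: int, overhead_kw: float) -> float:
    """Server draw in kW: total GPU power plus non-GPU overhead."""
    return gpu_w * gpus / 1000 + overhead_kw


def rack_power_kw(server_kw: float, servers_per_rack: int) -> float:
    """Rack draw in kW for a rack of identical servers."""
    return server_kw * servers_per_rack


if __name__ == "__main__":
    gpu_only = server_power_kw(H100_TRAINING_W, GPUS_PER_SERVER, overhead_kw=0.0)
    print(f"8 GPUs alone: {gpu_only:.1f} kW")
    for overhead in (0.9, 1.4):  # hypothetical non-GPU overhead, kW
        server = server_power_kw(H100_TRAINING_W, GPUS_PER_SERVER, overhead)
        rack = rack_power_kw(server, servers_per_rack=4)
        print(f"overhead {overhead} kW: server {server:.1f} kW, "
              f"4-server rack {rack:.1f} kW")
```

With 0.9–1.4 kW of overhead per server, four servers land at 26–28 kW, which matches the rack figures above.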

Purpose-built AI data center infrastructure accounts for this reality from the ground up: electrical distribution is sized for 40–100+ kW per rack, cooling systems are matched to that thermal density, and redundancy is engineered so that a power or cooling event does not cascade across an entire GPU cluster.

Power Density: The Numbers That Break Standard Facilities

The fundamental problem is watts per square foot. Legacy data centers were designed around storage arrays, network switches, and general-purpose servers. Their power distribution units (PDUs), busways, and UPS systems are sized accordingly. When GPU infrastructure moves into those spaces, engineers hit three hard limits almost immediately.

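Purpose-built facilities targeting AI workloads design toward power usage effectiveness (PUE, total facility power divided by IT equipment power) values of 1.2–1.4, and they provision electrical infrastructure at the rack level from the start rather than as an afterthought retrofit.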

Cooling Architecture for High-Density GPU Clusters

Power density and cooling are two sides of the same problem. Every watt consumed by a GPU becomes a watt of heat that must be removed. The three cooling modalities in active use today each carry different performance, cost, and operational implications.

Air Cooling

Raised-floor and overhead air cooling remains the dominant modality in general-purpose data centers. It is cost-effective, well-understood, and requires no changes to server hardware. The ceiling is the constraint: most air-cooled deployments struggle beyond 20–25 kW per rack without hot-aisle containment, precision in-row cooling units, and significant airflow engineering. Attempting to push 60–80 kW racks through air cooling alone produces hotspots, accelerated hardware degradation, and thermal throttling that reduces effective GPU utilization.
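The density ceiling follows from the sensible-heat relation Q = ρ · c_p · V̇ · ΔT: the airflow a rack needs grows linearly with its heat load. The sketch below assumes typical air properties (about 1.2 kg/m³ and 1005 J/(kg·K)) and a hypothetical 15 K inlet-to-exhaust temperature rise; real designs use their own measured values.

```python
# Airflow needed to remove a rack's heat with air alone.
# Assumptions: air density and specific heat near sea level at ~20 C,
# and a hypothetical inlet-to-exhaust temperature rise.

AIR_DENSITY = 1.2      # kg/m^3 (assumption)
AIR_CP = 1005.0        # J/(kg*K), specific heat of dry air (assumption)
M3S_TO_CFM = 2118.88   # 1 m^3/s expressed in cubic feet per minute


def required_airflow_cfm(heat_kw: float, delta_t_k: float) -> float:
    """Volumetric airflow (CFM) that carries heat_kw away at a given
    temperature rise, from Q = rho * cp * V * dT."""
    v_m3s = heat_kw * 1000 / (AIR_DENSITY * AIR_CP * delta_t_k)
    return v_m3s * M3S_TO_CFM


if __name__ == "__main__":
    for rack_kw in (10, 25, 80):
        cfm = required_airflow_cfm(rack_kw, delta_t_k=15)  # hypothetical 15 K rise
        print(f"{rack_kw:>3} kW rack: ~{cfm:,.0f} CFM")
```

At the same temperature rise, an 80 kW rack needs eight times the airflow of a 10 kW rack, and moving that much air evenly through a single rack is where the hotspots and throttling described above come from.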

Rear-Door Heat Exchangers

Rear-door heat exchangers (RDHx) attach to the back of standard racks and pass chilled water through a coil that absorbs heat from exhaust air before it enters the room. This approach extends the effective density ceiling to roughly 30–45 kW per rack without modifying server hardware and integrates reasonably well into existing facilities. RDHx systems are a sensible bridge technology for organizations upgrading legacy spaces, but they are still bounded by the rack's exhaust temperature and airflow rate.

Direct Liquid Cooling

Direct liquid cooling (DLC)—whether through cold plates attached to GPU heat spreaders or full immersion in dielectric fluid—removes heat at the source rather than managing it after it has entered the ambient air. Cold-plate DLC supports rack densities of 80–120 kW and above, reduces dependence on room-level cooling infrastructure, and can achieve PUE figures close to 1.1 in optimized deployments. The tradeoff is hardware compatibility: servers must be designed or modified for liquid cooling, and the facility must provision chilled water loops to each rack row.
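The advantage of liquid shows up in the flow rates. Using Q = ṁ · c_p · ΔT, the sketch below estimates the coolant flow a cold-plate loop needs for a given rack load. It assumes plain water (about 4186 J/(kg·K), about 1 kg/L) and a hypothetical 10 K supply-to-return rise; water-glycol mixes have a lower specific heat and need somewhat more flow.

```python
# Coolant flow needed for a liquid-cooled rack.
# Assumptions: plain water properties and a hypothetical temperature rise.

WATER_CP = 4186.0     # J/(kg*K), specific heat of water (assumption)
WATER_DENSITY = 1.0   # kg/L, approximate (assumption)


def coolant_flow_lpm(heat_kw: float, delta_t_k: float) -> float:
    """Liquid flow (litres per minute) that absorbs heat_kw with a
    supply-to-return rise of delta_t_k, from Q = m_dot * cp * dT."""
    mass_flow_kg_s = heat_kw * 1000 / (WATER_CP * delta_t_k)
    return mass_flow_kg_s / WATER_DENSITY * 60


if __name__ == "__main__":
    for rack_kw in (40, 80, 120):
        print(f"{rack_kw:>3} kW rack: ~{coolant_flow_lpm(rack_kw, 10):.0f} L/min")
```

Per unit volume and per degree of temperature rise, water carries roughly 3,500 times as much heat as air (about 4.19 MJ/(m³·K) versus about 1.2 kJ/(m³·K)), which is why a loop of well under 200 L/min can handle a rack load that air cooling cannot.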

For any GPU cluster running sustained training workloads at scale, direct liquid cooling is not a premium option—it is the architecture that matches the thermal profile of the hardware.

Redundancy and Reliability: What AI Workloads Require

A distributed training job running across 512 GPUs can represent days of compute time and significant cost. A power or cooling failure that takes down even one node mid-run typically requires restarting from the last checkpoint, wasting hours of accelerator time. This changes the reliability calculus compared to general-purpose workloads.
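The cost of a single failure can be estimated from the checkpoint interval. The sketch below is a simple model, not a measured result: it assumes the failure lands at a uniformly random point between checkpoints (so half an interval of progress is lost on average) and that every GPU in the synchronous job rolls back and waits through the restart. The checkpoint interval and restart overhead are hypothetical inputs.

```python
# Expected accelerator time lost to one failure in a synchronous training job.
# Assumptions: failure time is uniform within a checkpoint interval, and the
# whole job (all GPUs) rolls back and waits for the restart.


def expected_lost_gpu_hours(num_gpus: int, checkpoint_interval_h: float,
                            restart_overhead_h: float) -> float:
    """Average GPU-hours wasted by a single failure."""
    lost_wall_clock_h = checkpoint_interval_h / 2 + restart_overhead_h
    return num_gpus * lost_wall_clock_h


if __name__ == "__main__":
    # Hypothetical: checkpoint every 4 hours, 30 minutes to reschedule and reload.
    print(expected_lost_gpu_hours(512, checkpoint_interval_h=4.0, restart_overhead_h=0.5))
```

Under those assumptions, one failure on a 512-GPU job costs about 1,280 GPU-hours in expectation, regardless of whether one node or the whole row went down.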

Infrastructure engineers should evaluate any colocation or managed AI infrastructure provider against the following redundancy tiers:

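Uptime Institute Tier III certification requires concurrent maintainability (any capacity or distribution component can be taken offline for planned maintenance without shutting down IT equipment) and is commonly associated with 99.982% availability, but a tier rating is a design and construction standard, not an availability guarantee. For AI workloads, review the specific power and cooling architecture rather than relying solely on tier classification.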
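An availability percentage is easier to reason about as a downtime budget. The sketch below converts the 99.982% figure into minutes per year, assuming a 365-day year; it says nothing about how that downtime is distributed across outages.

```python
# Convert an availability percentage into an annual downtime budget.

MINUTES_PER_YEAR = 365 * 24 * 60  # non-leap year


def annual_downtime_minutes(availability_pct: float) -> float:
    """Downtime per year permitted by an availability percentage."""
    return (1 - availability_pct / 100) * MINUTES_PER_YEAR


if __name__ == "__main__":
    minutes = annual_downtime_minutes(99.982)
    print(f"99.982% availability -> {minutes:.1f} min/yr ({minutes / 60:.2f} h)")
```

That works out to roughly 95 minutes a year, and a single outage of that length is enough to interrupt a multi-day training run.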

TCO Implications: Retrofitted vs. Purpose-Built Facilities

The total cost of ownership gap between deploying AI infrastructure in a retrofitted commodity facility versus a purpose-built environment is frequently underestimated at procurement time and becomes apparent over the first 12–18 months of operation.

Retrofitted facilities accumulate hidden costs in several categories: electrical upgrade capital expenditure, cooling efficiency penalties reflected in monthly power bills, thermal throttling that reduces effective GPU utilization below the level assumed in capacity planning, and unplanned downtime events requiring job restarts. A conservative estimate places these hidden costs at 15–30% of annual infrastructure spend for high-density GPU deployments in under-provisioned facilities.
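The utilization effect compounds in a way that is easy to underestimate. The sketch below computes the cost per GPU-hour of useful work when throttling and restarts eat into planned utilization. Every input (hourly rate, planned utilization, throttle and downtime fractions) is a hypothetical planning parameter, not measured data from any facility.

```python
# Effective cost per GPU-hour of useful work under throttling and downtime.
# All inputs are hypothetical planning parameters.


def cost_per_useful_gpu_hour(hourly_cost: float, planned_utilization: float,
                             throttle_loss: float, downtime_loss: float) -> float:
    """Divide the paid hourly rate by the fraction of paid hours that
    actually produces full-speed work.

    planned_utilization: fraction of hours capacity planning assumed busy
    throttle_loss: fraction of throughput lost to thermal throttling
    downtime_loss: fraction of hours lost to outages and job restarts
    """
    effective = planned_utilization * (1 - throttle_loss) * (1 - downtime_loss)
    if effective <= 0:
        raise ValueError("effective utilization must be positive")
    return hourly_cost / effective


if __name__ == "__main__":
    planned = cost_per_useful_gpu_hour(2.00, 0.90, 0.0, 0.0)
    degraded = cost_per_useful_gpu_hour(2.00, 0.90, 0.10, 0.05)
    print(f"as planned: {planned:.2f} per useful GPU-hour")
    print(f"with throttling and restarts: {degraded:.2f} "
          f"({degraded / planned - 1:.0%} higher)")
```

Because the loss factors multiply, modest throttling and restart rates together raise the effective price of every useful GPU-hour, even though the invoice itself does not change.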

Purpose-built AI data center infrastructure absorbs those costs into the design phase, delivering predictable operating expenses, higher sustained GPU utilization, and power SLAs that can be written into service agreements.

Key Questions to Ask Any AI Infrastructure Partner

When evaluating colocation or managed infrastructure providers for GPU clusters, engineers should request direct answers to the following:

Frequently Asked Questions

What power density should I plan for a GPU training cluster?

Plan for a minimum of 40 kW per rack for current-generation GPU servers running sustained training workloads, and size for 80–100 kW per rack if you anticipate deploying next-generation accelerators or increasing rack density over the infrastructure contract term.
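Per-rack power budgets translate directly into footprint. The sketch below counts racks for a hypothetical 512-GPU cluster of 8-GPU servers at 7 kW each (the upper end of the per-server range quoted earlier). It counts only GPU servers against the budget and ignores switches, storage, and electrical derating, so treat it as a lower bound.

```python
import math

# Racks needed for a GPU cluster under a per-rack power budget.
# Simplifying assumption: only GPU servers count against the budget.


def racks_needed(total_gpus: int, gpus_per_server: int,
                 server_kw: float, rack_budget_kw: float) -> int:
    """Number of racks when each rack may draw at most rack_budget_kw."""
    servers = math.ceil(total_gpus / gpus_per_server)
    servers_per_rack = math.floor(rack_budget_kw / server_kw)
    if servers_per_rack < 1:
        raise ValueError("rack budget is below a single server's draw")
    return math.ceil(servers / servers_per_rack)


if __name__ == "__main__":
    # Hypothetical 512-GPU cluster of 8-GPU servers at 7 kW each.
    for budget_kw in (10, 40, 100):
        print(f"{budget_kw:>3} kW/rack -> {racks_needed(512, 8, 7.0, budget_kw)} racks")
```

The same 64 servers need 64 racks at a 10 kW budget, 13 racks at 40 kW, and 5 racks at 100 kW.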

Is air cooling viable for AI data center infrastructure?

Air cooling can support modest GPU deployments up to approximately 20–25 kW per rack with proper containment. Beyond that threshold, thermal throttling reduces GPU utilization and accelerates hardware wear. For dense training clusters, rear-door heat exchangers or direct liquid cooling are necessary.

How does PUE affect AI workload operating costs?

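At meaningful GPU cluster scale, PUE has a direct, linear effect on the power component of your monthly infrastructure cost, because total facility power equals IT load multiplied by PUE. Moving from a PUE of 1.8 to 1.3 reduces total facility power, and therefore the power bill, by roughly 28% on the same compute load (1.3 / 1.8 ≈ 0.72), while non-IT overhead power itself falls by more than 60%. Across a multi-year deployment, that is a material savings.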
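Since PUE is defined as total facility power divided by IT power, the comparison reduces to a few multiplications. The sketch below uses a hypothetical 1 MW IT load and a hypothetical electricity price, and assumes a constant load all year.

```python
# PUE = total facility power / IT equipment power, so for a fixed IT load
# facility power (and the energy bill) scales linearly with PUE.

HOURS_PER_YEAR = 8760


def facility_power_kw(it_load_kw: float, pue: float) -> float:
    """Total facility draw for a given IT load."""
    return it_load_kw * pue


def overhead_power_kw(it_load_kw: float, pue: float) -> float:
    """Non-IT draw (cooling, power conversion losses, lighting, etc.)."""
    return it_load_kw * (pue - 1)


def annual_energy_cost(it_load_kw: float, pue: float, price_per_kwh: float) -> float:
    """Yearly energy cost, assuming a constant load (a simplification)."""
    return facility_power_kw(it_load_kw, pue) * HOURS_PER_YEAR * price_per_kwh


if __name__ == "__main__":
    it_kw, price = 1000.0, 0.08  # hypothetical 1 MW IT load and $/kWh
    for pue in (1.8, 1.3):
        print(f"PUE {pue}: facility {facility_power_kw(it_kw, pue):,.0f} kW, "
              f"overhead {overhead_power_kw(it_kw, pue):,.0f} kW, "
              f"~${annual_energy_cost(it_kw, pue, price):,.0f}/yr")
```

For a 1,000 kW IT load, facility draw falls from 1,800 kW to 1,300 kW (about 28%), while the non-IT overhead falls from 800 kW to 300 kW (62.5%); the 28% figure is the reduction in total facility power.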

What is the difference between cold-plate DLC and immersion cooling?

Cold-plate DLC circulates liquid coolant through a metal plate mounted directly on the GPU heat spreader, removing heat by conduction while requiring only modest changes to the surrounding server chassis. Immersion cooling submerges the entire server in a bath of dielectric fluid. Both achieve similar thermal performance at high densities; cold-plate DLC is more broadly compatible with standard server hardware and is currently the more common choice for new AI data center builds.

Key Takeaways

Talk to OneSource Cloud About Purpose-Built AI Infrastructure

OneSource Cloud designs and operates infrastructure built specifically for GPU cluster workloads—not retrofitted from general-purpose colocation. Our facilities are engineered for the power densities and cooling requirements that modern AI deployments demand, with contractual power SLAs and PUE commitments that reflect real operating conditions.

If you are evaluating infrastructure options for a GPU cluster deployment or assessing whether your current facility can support your AI roadmap, contact our infrastructure team for a technical consultation. You can also schedule a 30-minute call to discuss your specific power, cooling, and redundancy requirements with an engineer who works on these problems daily.
